DATABRICKS-CERTIFIED-ASSOCIATE-DEVELOPER-FOR-APACHE-SPARK Exam Questions & Answers

Vendor: Databricks

Certifications: Databricks Certifications

Exam Code: DATABRICKS-CERTIFIED-ASSOCIATE-DEVELOPER-FOR-APACHE-SPARK

Exam Name: Databricks Certified Associate Developer for Apache Spark 3.0

Updated: May 31, 2026

Q&As: 180

Note: The product is available for instant download. Please sign in and click My Account to download it.

The DATABRICKS-CERTIFIED-ASSOCIATE-DEVELOPER-FOR-APACHE-SPARK Questions & Answers cover all the knowledge points of the real exam. We update our product frequently so our customers always have the latest version of the brain dumps. We provide our customers with excellent 24/7 customer service, and a professional expert team backs our high-quality products. If you still cannot decide whether to purchase our product, please try our free demo.

Download Free Databricks DATABRICKS-CERTIFIED-ASSOCIATE-DEVELOPER-FOR-APACHE-SPARK Demo

Experience Pass4itsure.com exam material in PDF version.

Simply submit your e-mail address below to get started with our PDF real exam demo of your Databricks DATABRICKS-CERTIFIED-ASSOCIATE-DEVELOPER-FOR-APACHE-SPARK exam.

Instant download

Demo updated to match the latest real exam

* Our demo shows only a few questions from your selected exam, for evaluation purposes.

PDF

- Q&As Identical to the VCE Product

- Windows, Mac, Linux, Mobile Phone

- Printable PDF without Watermark

- Instant Download Access

- Download Free PDF Demo

- Includes 365 Days of Free Updates

VCE

- Q&As Identical to the PDF Product

- Windows Only

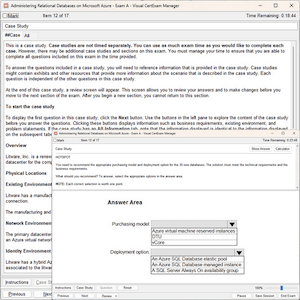

- Simulates a Real Exam Environment

- Review Test History and Performance

- Instant Download Access

- Includes 365 Days of Free Updates

Printable PDF